
AI-powered polymorphic malware now creates unique, automated threats at machine speed, rendering traditional signature-based detection obsolete.

In 2026, autonomous strains like PromptLock and BlackMamba use Large Language Models (LLMs) to rewrite their code in real time, exploiting vulnerabilities before human-led security teams can react. This shift has transformed reaction time into the defining variable of cybersecurity.

Legacy response workflows are currently too slow to stop AI agents that are weaponizing new Common Vulnerabilities and Exposures (CVE) entries in minutes.

Organizations must move from manual triage to automated response and real-time intelligence dissemination. This advisory examines the mechanics of these AI threats and outlines a transition to behavioral-based, machine-speed defense.


How Has AI Transformed the Creation of Modern Malware?

AI transforms malware from a manageable nuisance into an autonomous force. Polymorphic malware has existed for decades, but modern AI allows attackers to produce hundreds of unique variants every hour. This shift makes traditional signature-based defenses ineffective and hands the advantage to the attacker.

Accessibility is the catalyst for this change. Tools like WormGPT and FraudGPT are unfettered LLMs available on the dark web for as little as $220. These tools democratize offensive capabilities by removing the need for deep coding expertise.

According to SecurityWeek’s Cyber Insights 2026 report, 41% of ransomware families now use AI for dynamic behavior modification. Attackers use these models to obfuscate payloads and craft exploits from fresh vulnerabilities in minutes. This allows for the automation of full attack chains with minimal effort.

AI collapses the time between vulnerability discovery and weaponized exploitation to near-zero. Autonomous AI agents now orchestrate intrusions at a machine pace that far outstrips traditional threat actors. Every variant features unique hashes and execution flows even when they share the same malicious intent.
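The point about unique hashes can be illustrated with a minimal, harmless sketch. The two snippets below are hypothetical stand-ins for "variants": they only add numbers, yet they show how a trivial rewrite yields a different SHA-256 digest while the behavior stays the same. The names `VARIANT_A`, `VARIANT_B`, `sha256_hex`, and `run_total` are assumptions made for illustration.

```python
import hashlib

# Two benign snippets with identical behavior but different source text.
VARIANT_A = "def total(values):\n    return sum(values)\n"
VARIANT_B = (
    "def total(items):\n"
    "    acc = 0\n"
    "    for item in items:\n"
    "        acc += item\n"
    "    return acc\n"
)


def sha256_hex(source: str) -> str:
    """Return the SHA-256 digest a hash-based signature would key on."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()


def run_total(source: str, data: list) -> int:
    """Execute the benign demo snippet and call its total() function."""
    namespace: dict = {}
    exec(source, namespace)  # demo only: the source is the constant above
    return namespace["total"](data)


same_hash = sha256_hex(VARIANT_A) == sha256_hex(VARIANT_B)
same_behavior = run_total(VARIANT_A, [1, 2, 3]) == run_total(VARIANT_B, [1, 2, 3])

print("hashes match:", same_hash)
print("behavior matches:", same_behavior)
```

A blocklist keyed on the first digest would never match the second snippet, which is why the rest of this advisory leans on behavior and infrastructure rather than file hashes.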

How Does AI-Powered Polymorphic Malware Evade Traditional Security?

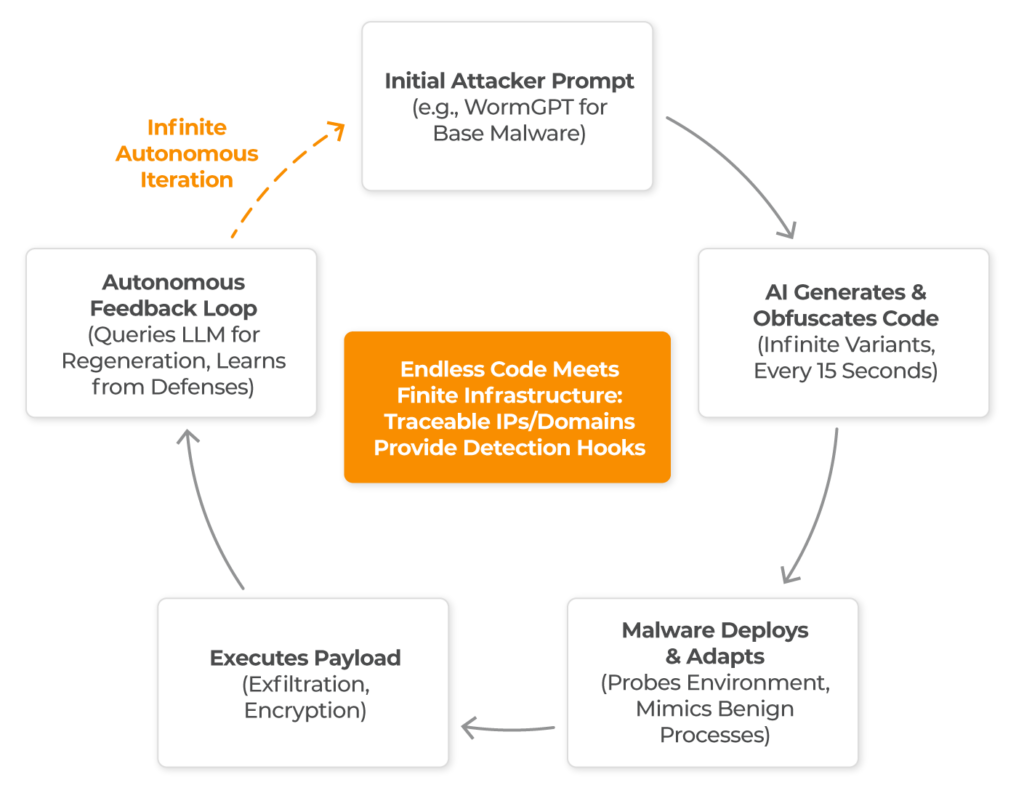

AI-powered polymorphic malware evades security by using LLMs to constantly rewrite its own source code. This process makes the malware invisible to traditional tools that look for known file patterns. The malware’s constant mutation forces a shift from identifying known bad files to analyzing real-time behavior.

These threats use four interlocking capabilities:

Code Generation and Obfuscation

Models reorder instructions and rename variables to hide intent. BlackMamba, for instance, uses Python’s exec() function to run AI-generated code directly in memory, leaving no forensic footprint on the disk.

Dynamic Runtime Adaptation

Strains like PROMPTFLUX query AI models during execution to fingerprint the target environment, adapting instantly to bypass specific endpoint security tools, like EDRs.

Embedded LLM Integration

Malware now communicates with local AI models via APIs like Ollama. PromptLock generates new scripts for scanning and encryption with every cycle, ensuring no two executions are identical.

Semantic Evasion and Autonomy

Evasion has shifted from hiding code patterns to mimicking legitimate behavior. AI generates ‘clean’ code that mimics legitimate system calls. In 2026, this includes the abuse of antivirus tools to deliver hidden payloads without human oversight.

The result is devastating for traditional signature-based detection or any response workflow that depends on human review or alert correlation. Detection rates for AI-modified variants have dropped to approximately 61% in controlled testing. Dwell time now averages 276 days, meaning organizations often remain compromised for over nine months.

When an AI agent can pivot through a network in seconds, a response process measured in hours or days is equivalent to no response at all.

Why Is Infrastructure the Weak Link for AI Malware?

While AI generates infinite code, the digital infrastructure used to launch attacks remains finite and traceable. Attackers can change code in seconds, but they cannot easily hide the physical IPs and domains required for command and control.

The number of AI-generated variants is effectively unbounded because producing one more code variation costs almost nothing. The infrastructure that supports those attacks is different. IP addresses and domains are scarce, registered resources: the IPv4 address space, for example, holds only about 4.3 billion addresses.

Acquiring new infrastructure carries tangible costs in time and money. Domain registration leaves trails through WHOIS records and registrar logs. Even with specialized hosting and complex DNS techniques, attackers cannot spin up infinite infrastructure without increasing exposure.

Chasing individual file hashes in an AI-powered landscape is futile. Correlating infrastructure indicators remains a powerful strategy. These include IP ranges, domain patterns, and TLS certificate fingerprints.
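As a rough sketch of that correlation idea, the snippet below groups sightings by the infrastructure they touched instead of by file hash. The sample data, the indicator type labels, and the helper names (`cluster_by_infrastructure`, `shared_indicators`) are all hypothetical; the domain and IP come from ranges reserved for documentation.

```python
from collections import defaultdict

# Hypothetical sightings: (file_hash, indicator_type, indicator_value).
SIGHTINGS = [
    ("hash-001", "domain", "c2.example.net"),
    ("hash-002", "domain", "c2.example.net"),
    ("hash-003", "ip", "203.0.113.10"),
    ("hash-004", "ip", "203.0.113.10"),
    ("hash-005", "domain", "c2.example.net"),
    ("hash-006", "tls_fp", "ab:cd:ef"),
]


def cluster_by_infrastructure(sightings):
    """Map each infrastructure indicator to the set of hashes seen using it."""
    clusters = defaultdict(set)
    for file_hash, kind, value in sightings:
        clusters[(kind, value)].add(file_hash)
    return dict(clusters)


def shared_indicators(clusters, min_samples=2):
    """Keep indicators reused by at least min_samples distinct file hashes."""
    return {
        indicator: sorted(hashes)
        for indicator, hashes in clusters.items()
        if len(hashes) >= min_samples
    }


clusters = cluster_by_infrastructure(SIGHTINGS)
pivots = shared_indicators(clusters)

for (kind, value), hashes in pivots.items():
    print(f"{kind} {value} is shared by {len(hashes)} distinct samples")
```

Six unrelated-looking hashes collapse into two reusable pivots here, so blocking the shared domain and IP covers five samples at once.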

Which Active AI Threats Are Surfacing in 2026?

In 2026, autonomous malware has moved from research to global campaigns. The following threats are currently active with Indicators of Compromise (IoCs) surfacing in global intelligence feeds:

PromptLock Ransomware

A cross-platform Golang threat that uses the Ollama API to generate dynamic Lua scripts. Its reliance on specific API endpoints creates traceable anchors for defenders.

BlackMamba Keylogger

Fetches AI-generated code at runtime and exfiltrates data via Microsoft Teams. Despite its polymorphic nature, it shows consistent patterns in domain reuse.

LAMEHUG and PROMPTFLUX

Families linked to fully autonomous attack chains that conduct reconnaissance and select targets without human involvement.

MalTerminal

A GPT-4-powered tool that generates ransomware on demand. While it can produce an effectively unlimited number of variants, its infrastructure footprint provides reliable hooks for threat intelligence.

Research from Anthropic shows that autonomous AI agents can now deploy thousands of personalized phishing emails per second. They can also generate functional ransomware in under a minute. These capabilities demand an automated, machine-speed response.

How Do AI Threats Map to the MITRE ATT&CK Framework?

Mapping AI-enhanced threats to the MITRE ATT&CK framework allows teams to align detection rules with machine-speed tactics.

| Technique ID | Name | AI-Enhanced Context |
| --- | --- | --- |
| T1588.007 | Obtain Capabilities: AI | Acquiring or accessing LLMs for reconnaissance, script generation, and payload creation. |
| T1027 | Obfuscated Files or Information | AI-mutated code using dead code insertion, variable renaming, and control flow alteration. |
| T1566 | Phishing | AI-personalized lures with contextual social engineering, including deepfake audio and video. |
| T1059 | Command and Scripting Interpreter | Dynamic script generation via local or remote LLM endpoints (e.g., Ollama, custom APIs). |
| T1003 | OS Credential Dumping | Memory injection techniques that evade signature detection but trigger behavioral alerts. |
| AML.T0054 | LLM Jailbreak (MITRE ATLAS) | Bypassing AI model safeguards to generate malicious content or enable offensive operations. |
| T1078 | Valid Accounts | AI-automated credential testing and session hijacking at scale. |
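One way to put this mapping to work is to tag each detection rule with the technique IDs it covers and then look for gaps. The sketch below assumes a made-up rule inventory: the rule names in `RULE_TO_TECHNIQUES` and the helpers `annotate` and `coverage_gaps` are hypothetical, while the technique IDs and names are copied from the table above.

```python
# Technique IDs and names as listed in the table above.
TECHNIQUES = {
    "T1588.007": "Obtain Capabilities: AI",
    "T1027": "Obfuscated Files or Information",
    "T1566": "Phishing",
    "T1059": "Command and Scripting Interpreter",
    "T1003": "OS Credential Dumping",
    "AML.T0054": "LLM Jailbreak (MITRE ATLAS)",
    "T1078": "Valid Accounts",
}

# Hypothetical detection rules and the techniques each one is meant to cover.
RULE_TO_TECHNIQUES = {
    "script_generated_via_llm_endpoint": ["T1059"],
    "high_entropy_script_mutation": ["T1027"],
    "bulk_login_attempts": ["T1078"],
}


def annotate(alert: dict) -> dict:
    """Return a copy of the alert enriched with (id, name) technique pairs."""
    ids = RULE_TO_TECHNIQUES.get(alert["rule"], [])
    return {**alert, "techniques": [(tid, TECHNIQUES[tid]) for tid in ids]}


def coverage_gaps() -> list:
    """List technique IDs from the table that no rule currently covers."""
    covered = {tid for ids in RULE_TO_TECHNIQUES.values() for tid in ids}
    return sorted(set(TECHNIQUES) - covered)


print(annotate({"rule": "bulk_login_attempts", "host": "vpn-01"}))
print("uncovered techniques:", coverage_gaps())
```

With this illustrative inventory, four of the seven listed techniques have no rule at all, which is the kind of gap the mapping exercise is meant to surface.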

How Can Organizations Survive the Era of AI Malware?

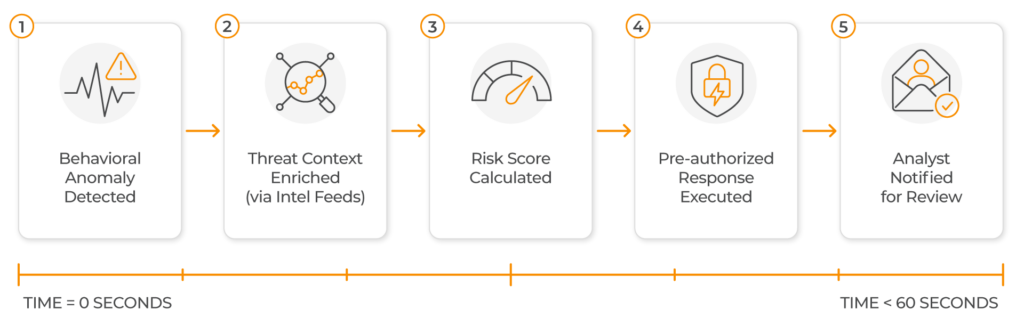

Surviving the AI malware era requires a three-pillar strategy that focuses on behavior, automation, and real-time intelligence. This approach aligns with a Zero Trust architecture, where security teams must assume breach and verify every digital interaction regardless of its origin.

Pillar 1: Behavioral Detection

Behavioral detection asks if activity is consistent with legitimate operations rather than searching for known code patterns.

- Establish dynamic baselines for network traffic and authentication.

- Identify deviations, such as unusual API calls to LLM endpoints or data movement to new domains.
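The two checks above could be sketched roughly as follows, assuming hourly outbound connection counts per host are already being collected. The threshold, the event field names, and the hostname list are illustrative assumptions; 11434 is Ollama's default listening port.

```python
from statistics import mean, pstdev

LLM_PORTS = {11434}              # Ollama's default listening port
LLM_HOSTS = {"api.openai.com"}   # illustrative list, extend per environment


def build_baseline(history: dict) -> dict:
    """history maps host -> list of hourly outbound connection counts."""
    return {host: (mean(counts), pstdev(counts)) for host, counts in history.items()}


def is_volume_anomaly(baseline: dict, host: str, count: int, threshold: float = 3.0) -> bool:
    """Flag counts more than `threshold` standard deviations from the host's mean."""
    if host not in baseline:
        return True  # no baseline yet: treat as a deviation worth reviewing
    mu, sigma = baseline[host]
    if sigma == 0:
        return count != mu
    return abs(count - mu) / sigma > threshold


def is_unexpected_llm_call(event: dict, allowed_hosts: set) -> bool:
    """Flag LLM endpoint traffic from hosts not approved to use such APIs."""
    talks_to_llm = event["dst_port"] in LLM_PORTS or event["dst_host"] in LLM_HOSTS
    return talks_to_llm and event["src_host"] not in allowed_hosts


baseline = build_baseline({"web-01": [100, 110, 90, 105, 95]})
event = {"src_host": "web-01", "dst_host": "10.0.0.7", "dst_port": 11434}

print(is_volume_anomaly(baseline, "web-01", 300))
print(is_unexpected_llm_call(event, allowed_hosts={"ml-01"}))
```

A static z-score is only a starting point. A production baseline would likely need to account for time of day and be recomputed continuously to stay "dynamic" in the sense used above.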

Pillar 2: Automated Response

Automation must move from reactive alerts that still require human action to proactive containment that executes at machine speed.

- Implement pre-authorized playbooks for endpoint isolation and credential revocation.

- Reduce your attack surface by restricting unnecessary AI API access from production environments.
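A pre-authorized playbook can be thought of as a lookup from alert type to a list of containment actions that run without waiting for approval. The sketch below is a simplified illustration: the `Playbook` class, the alert fields, the severity scale, and both action functions are hypothetical, and the actions only record what they would do instead of calling a real EDR or identity provider.

```python
from dataclasses import dataclass
from typing import Callable, List


def isolate_endpoint(alert: dict, log: List[str]) -> None:
    # Placeholder: a real action would call the EDR's isolation API.
    log.append(f"isolate:{alert['host']}")


def revoke_credentials(alert: dict, log: List[str]) -> None:
    # Placeholder: a real action would call the identity provider.
    log.append(f"revoke:{alert['user']}")


@dataclass
class Playbook:
    name: str
    min_severity: int  # alerts below this level go to a human instead
    actions: List[Callable[[dict, List[str]], None]]


# Hypothetical pre-authorized playbooks keyed by alert type.
PLAYBOOKS = {
    "c2_beacon": Playbook("contain-c2", 7, [isolate_endpoint, revoke_credentials]),
}


def respond(alert: dict, log: List[str]) -> bool:
    """Run the matching playbook immediately; escalate when none applies."""
    playbook = PLAYBOOKS.get(alert["type"])
    if playbook is None or alert["severity"] < playbook.min_severity:
        log.append(f"escalate:{alert['host']}")
        return False
    for action in playbook.actions:
        action(alert, log)
    return True


audit_log: List[str] = []
respond({"type": "c2_beacon", "severity": 9, "host": "web-01", "user": "svc-app"}, audit_log)
print(audit_log)
```

The severity floor is the pre-authorization boundary in this sketch: anything at or above it is contained automatically, and everything else still reaches an analyst.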

Pillar 3: Real-Time Intelligence

Threat intelligence loses value quickly in an environment where AI weaponizes vulnerabilities in minutes. Organizations need platforms that ingest and disseminate threat data continuously.

- Consume intelligence feeds in real time rather than in daily batches.

- Prioritize infrastructure-level IoCs, such as IP ranges and domain clusters, which retain value longer than file hashes.
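One way to express "retains value longer" is an exponential decay score with a different half-life per indicator type. The half-life values below are assumptions chosen purely for illustration, not measured figures, and `ioc_score` and `prioritize` are hypothetical helper names.

```python
# Assumed half-lives in hours. Illustrative values only, not measurements.
HALF_LIFE_HOURS = {
    "file_hash": 1.0,
    "domain": 72.0,
    "ip_range": 168.0,
}


def ioc_score(kind: str, age_hours: float, initial: float = 1.0) -> float:
    """Exponentially decay an indicator's score based on its type and age."""
    return initial * 0.5 ** (age_hours / HALF_LIFE_HOURS[kind])


def prioritize(indicators: list) -> list:
    """Sort (kind, value, age_hours) tuples by decayed score, highest first."""
    return sorted(indicators, key=lambda i: ioc_score(i[0], i[2]), reverse=True)


day_old = [
    ("file_hash", "hash-001", 24.0),
    ("domain", "c2.example.net", 24.0),
    ("ip_range", "203.0.113.0/24", 24.0),
]

for kind, value, age in prioritize(day_old):
    print(f"{kind:10s} {value:18s} score={ioc_score(kind, age):.3f}")
```

Under these assumed half-lives, a day-old file hash scores close to zero while the IP range and domain remain worth acting on, which mirrors the prioritization described in the list above.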

How Should Your Organization Defend Against AI Malware?

Malware can now be generated faster than it can be named or categorized. Organizations relying on signature detection and human-mediated response will find themselves perpetually behind, analyzing artifacts that have already mutated.

By focusing on behavioral detection and real-time intelligence and response, organizations can neutralize AI threats that move too fast for manual review. Defenders who do this will build resilient operations and set the standard for effective defense in the age of AI.

Find out more about how Lumu can help you embrace automated machine-speed security by registering for a live demo today.