The emergence of artificial intelligence as more than a science fiction trope is understandably exciting. Predictions range from “AI will uplift humanity and open up new industries”, to “AI will take all our jobs”, to fears of a Terminator-style extermination of humanity. Whatever your stance, there is no denying that AI is a powerful tool. But like any tool, the key is how you use it.

Just a Buzzword?

‘AI’ and ‘machine learning’ have been repeated so often on conference floors that some now dismiss them as the newest cybersecurity buzzwords. These technologies offer immense value, but expectations need to be tempered. The techniques grouped under the umbrella of artificial intelligence do not (yet) amount to a ‘general’ intelligence; they are advanced mathematical and statistical models. Used together cleverly, these tools let us perform tasks previously reserved for human minds, as well as some tasks that will always be out of reach for mere mortals.

AI holds several advantages that can be realized in cybersecurity. Automation brings undeniable economies of scale: modern computing can run far more analyses than human minds, at a fraction of the cost. Machine learning algorithms can identify patterns and correlations across many datasets as they are updated in real time. These abilities can be applied to prioritizing tasks, so that analysts spend their time on what matters most. The potential applications are wide-ranging.

Design That Takes Limitations Into Account

Because these technologies are still new, engineers are still learning how best to implement and optimize them. There are important limitations to consider. For example, some models take a very long time to train. While a mature machine learning-enabled system can deliver impressive results, early on it is likely to produce many false positives, false negatives, and other inaccuracies. Building separate analytical systems may be the best choice for certain processes, but each one brings its own learning curve, and those learning curves can cause problems. Some vendors expose their customers to the early stages of this learning curve, causing a flood of false alerts and headaches for the end-user.

Many of these techniques are effective only in narrow situations and break down when applied to broader real-world problems. In one example, a man was denied entry to his own home by his ‘smart’ front door. The problem? He was wearing a Batman t-shirt. The facial recognition software mistook the caped crusader for a masked intruder and promptly barred entry. When misused, AI will deliver a multitude of similar false positives, creating more work than it saves for a security analyst.

The Need to Incorporate Humans in AI Design

Such is the industry’s craze for artificial intelligence that some vendors feel compelled to embrace it, often with undesirable effects. In some cases, end-users are given the ability to change parameters and configurations. When those users are not trained in the intricacies of the analytical model at hand and do not fully understand it, the results can be highly counterproductive. In other cases, the sheer volume of raw results simply overwhelms the user.

There is also a misconception that AI will run autonomously after its initial implementation. In fact, automation engineers must be retained to maintain such systems, and their skills are among the most in-demand in any industry. Cybersecurity already faces a large skills shortage, making it exceedingly difficult to find and retain talent that combines both cybersecurity and machine learning expertise.

Even with the current state of the art, 100% accurate prediction is unreachable, which means a human must always have the final say over its results. Any AI implementation therefore needs to acknowledge that it is not an infallible, autonomous ‘being’ that replaces the end-user. Rather, it is a tool in the hands of an end-user: a powerful tool, but a tool nonetheless.

A Tool Like Any Other

“When the only tool you have is a hammer, everything looks like a nail.” The understandable excitement over artificial intelligence and machine learning has, unfortunately, left many people seeing nails everywhere. Far too often, the first question asked is which AI techniques are being used. This treats AI as the end, when in fact it is the means. Instead, we should ask ourselves whether we are implementing AI to realize efficiencies and deliver the best possible product to end-users. We must make sure that AI is indeed working for us, rather than us working for it.

The most effective present-day examples of artificial intelligence are those designed for minimal user involvement. Technologies such as facial recognition, content recommendations, and automated driving are retrained in the background. They do not ask the end-user to define constants and thresholds. Implementing AI in this way ensures that it works for the user, often without the user even realizing it is there. Why should it be any different in cybersecurity?

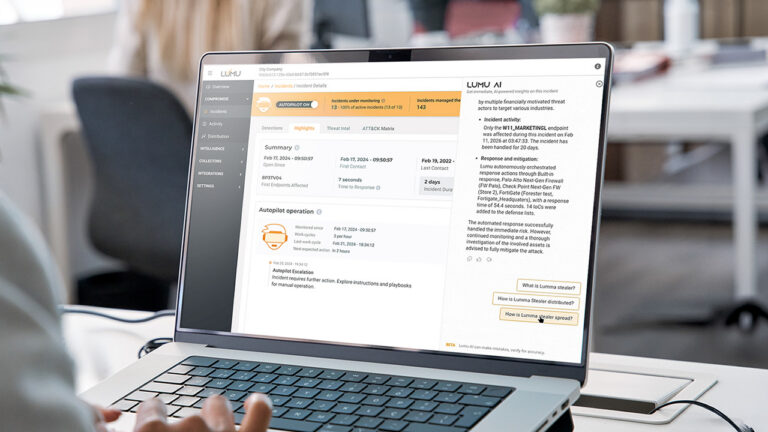

At Lumu, we understand the value of artificial intelligence techniques, but also the importance of designing systems that account for their limitations and, most importantly, for the end-user. For us, AI is just one cog in the elegant machinery that we call the Illumination Process. We built this process from the ground up to answer the most important questions in cybersecurity and to deliver those answers to our customers in a user-friendly way, without installation and maintenance headaches.